List Files

- v5.2.38 and later

The List Files task retrieves a list of files from a specified location. It supports cloud storage buckets, Git repositories, and website sitemaps.

During execution, the task detects the source type from the URL scheme. For example, a URL that begins with s3:// is treated as an AWS S3 bucket, and a URL that begins with https://github.com/ is treated as a GitHub repository. The task lists the files at that location (filtered by file type, if provided) and returns their absolute paths as an array. If you specify an output location, the task also writes the list to that location.
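The detection step can be pictured with a small Python sketch. This only illustrates scheme-based dispatch and is not the task's actual implementation, which this page does not show. The function name `detect_source_type` and the returned labels are hypothetical, and only the two URL patterns named above are covered.

```python
from urllib.parse import urlparse


def detect_source_type(url: str) -> str:
    """Classify a location by URL scheme and host (illustrative only)."""
    parsed = urlparse(url)
    if parsed.scheme == "s3":
        # s3://bucket/prefix is treated as an AWS S3 bucket.
        return "aws_s3"
    if parsed.scheme == "https" and parsed.netloc == "github.com":
        # https://github.com/owner/repo is treated as a GitHub repository.
        return "github"
    # Other supported sources are not covered in this sketch.
    return "unknown"


if __name__ == "__main__":
    print(detect_source_type("s3://my-bucket/docs/"))
    print(detect_source_type("https://github.com/conductor-oss/conductor"))
```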

If the location is not publicly accessible, create an integration in Orkes Conductor with the required access keys or tokens for your source, such as a Git Repository integration or the relevant cloud provider integration.

Task parameters

Configure these parameters for the List Files task.

| Parameter | Description | Required/ Optional |

|---|---|---|

| inputParameters.inputLocation | The location of the files to be listed. Example based on the integration type:

| Required. |

| inputParameters.integrationName | If the location of the file to be listed is not publicly available, select the integration name of the Git Repository or Cloud Providers integrated with your Conductor cluster. Note: If you haven’t configured any integration on your Orkes Conductor cluster, go to the Integrations tab and configure the Git Repository or required Cloud Providers. | Optional. |

| inputParameters.fileTypes | The file types to be listed. If omitted, all file types are included. Supported values:

| Optional. |

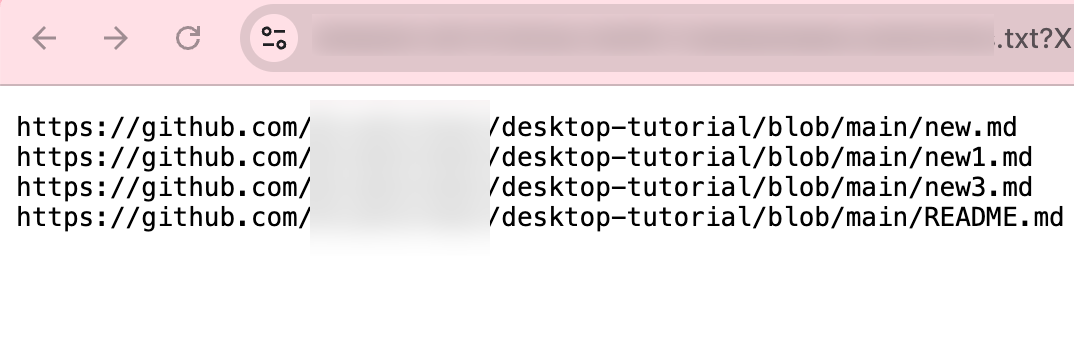

| inputParameters.outputLocation | The storage location where the resulting file list is saved as a text file, with each line in the text file containing one absolute file path. For example:

Note: Cloud storage output requires a corresponding integration with write permissions. Use integrationNames parameter (e.g., {"aws": "my-integration"}) to add any additional integration configurations. | Optional. |

| inputParameters.integrationNames | A key-value map of integration types and names. Use this when multiple integrations are needed. The key represents the type of integration (for example, git, aws, gcp, hubspot), and the value specifies the name of the corresponding integration. | Optional. |

Other generic parameters

The following generic parameters configure task behavior and are not specific to the List Files task.

| Parameter | Description | Required/Optional |
|---|---|---|
| optional | Whether the task is optional. If set to true, any task failure is ignored, and the workflow continues with the task status updated to COMPLETED_WITH_ERRORS. However, the task must reach a terminal state. If the task remains incomplete, the workflow waits until it reaches a terminal state before proceeding. | Optional. |

Task configuration

This is the task configuration for a List Files task.

{
  "name": "list_files",
  "taskReferenceName": "lf",
  "type": "LIST_FILES",
  "inputParameters": {
    "inputLocation": "<YOUR-LOCATION>",
    "fileTypes": ["<YOUR-FILE-TYPE>"]
  }
}
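If you generate workflow definitions programmatically, a configuration like this one can be assembled from the parameters described earlier. The following Python sketch is one possible way to do so; the helper name `build_list_files_task` and its argument names are hypothetical, while the JSON keys are the ones documented on this page. Unset optional parameters are simply left out.

```python
import json
from typing import Optional


def build_list_files_task(
    task_reference_name: str,
    input_location: str,
    file_types: Optional[list] = None,
    integration_name: Optional[str] = None,
    output_location: Optional[str] = None,
    integration_names: Optional[dict] = None,
    optional: bool = False,
) -> dict:
    """Build a LIST_FILES task definition, omitting unset optional fields."""
    input_parameters = {"inputLocation": input_location}
    if file_types:
        input_parameters["fileTypes"] = list(file_types)
    if integration_name:
        input_parameters["integrationName"] = integration_name
    if output_location:
        input_parameters["outputLocation"] = output_location
    if integration_names:
        input_parameters["integrationNames"] = dict(integration_names)

    task = {
        "name": "list_files",
        "taskReferenceName": task_reference_name,
        "type": "LIST_FILES",
        "inputParameters": input_parameters,
    }
    if optional:
        # Generic task parameter: failures are tolerated by the workflow.
        task["optional"] = True
    return task


if __name__ == "__main__":
    task = build_list_files_task(
        "lf",
        "https://github.com/conductor-oss/conductor",
        file_types=["md"],
    )
    print(json.dumps(task, indent=2))
```

The printed JSON can be pasted into the tasks array of a workflow definition.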

Task output

The List Files task returns the following parameters.

| Parameter | Description |
|---|---|
| files | An array of absolute file paths listed from the input location. |

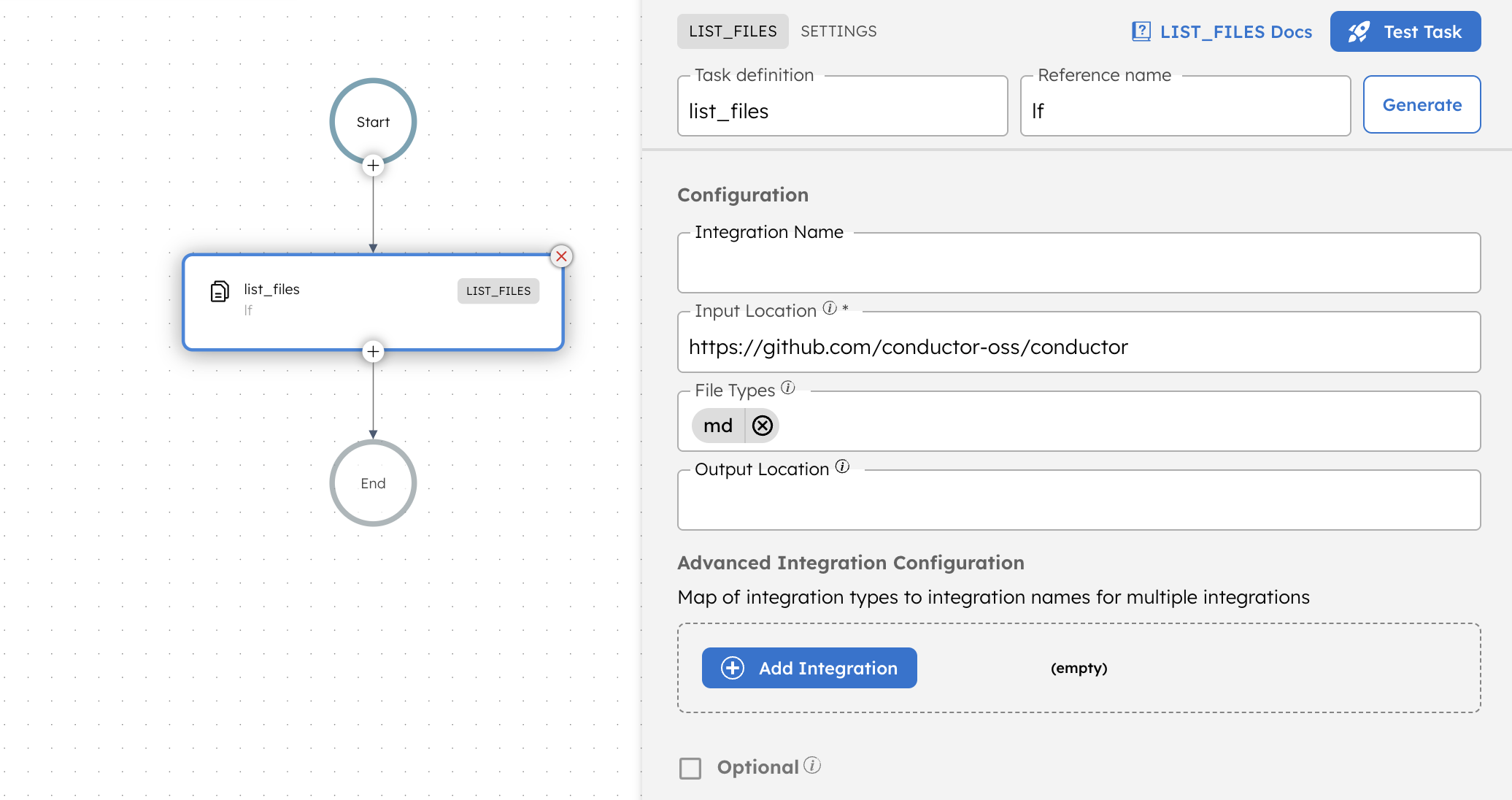

Adding a List Files task in UI

To add a List Files task:

- In your workflow, select the (+) icon and add a List Files task.

- In Input Location, enter the location of the files to be listed.

- (Optional) For private URLs, in Integration Name, select the integration on your cluster that provides access to the location of the files.

- (Optional) In File Types, select the file type to be listed. Select all or leave blank to include all types.

- (Optional) In Output Location, enter an output location to store the list of files as a text file.

- (Optional) In Advanced Integration Configuration, select + Add Integration when multiple integrations are needed. The key represents the type of integration (for example, git, aws, gcp), and the value specifies the name of the corresponding integration.

Examples

Here are some examples for using the List Files task.

Using List Files task

To illustrate the use of the List Files task, consider the following workflow that lists all Markdown (.md) files from a public GitHub repository.

To create a workflow definition using Conductor UI:

- Go to Definitions > Workflow from the left navigation menu on your Conductor cluster.

- Select + Define workflow.

- In the Code tab, paste the following code:

Workflow definition:

{
  "name": "list_files_demo",
  "description": "Simple test workflow for LIST_FILES",
  "version": 1,
  "tasks": [
    {
      "name": "list_files",
      "taskReferenceName": "lf",
      "inputParameters": {
        "inputLocation": "https://github.com/conductor-oss/conductor",
        "fileTypes": [
          "md"
        ],
        "integrationNames": {},
        "outputLocation": ""
      },
      "type": "LIST_FILES"
    }
  ],
  "schemaVersion": 2
}

- Select Save > Confirm.

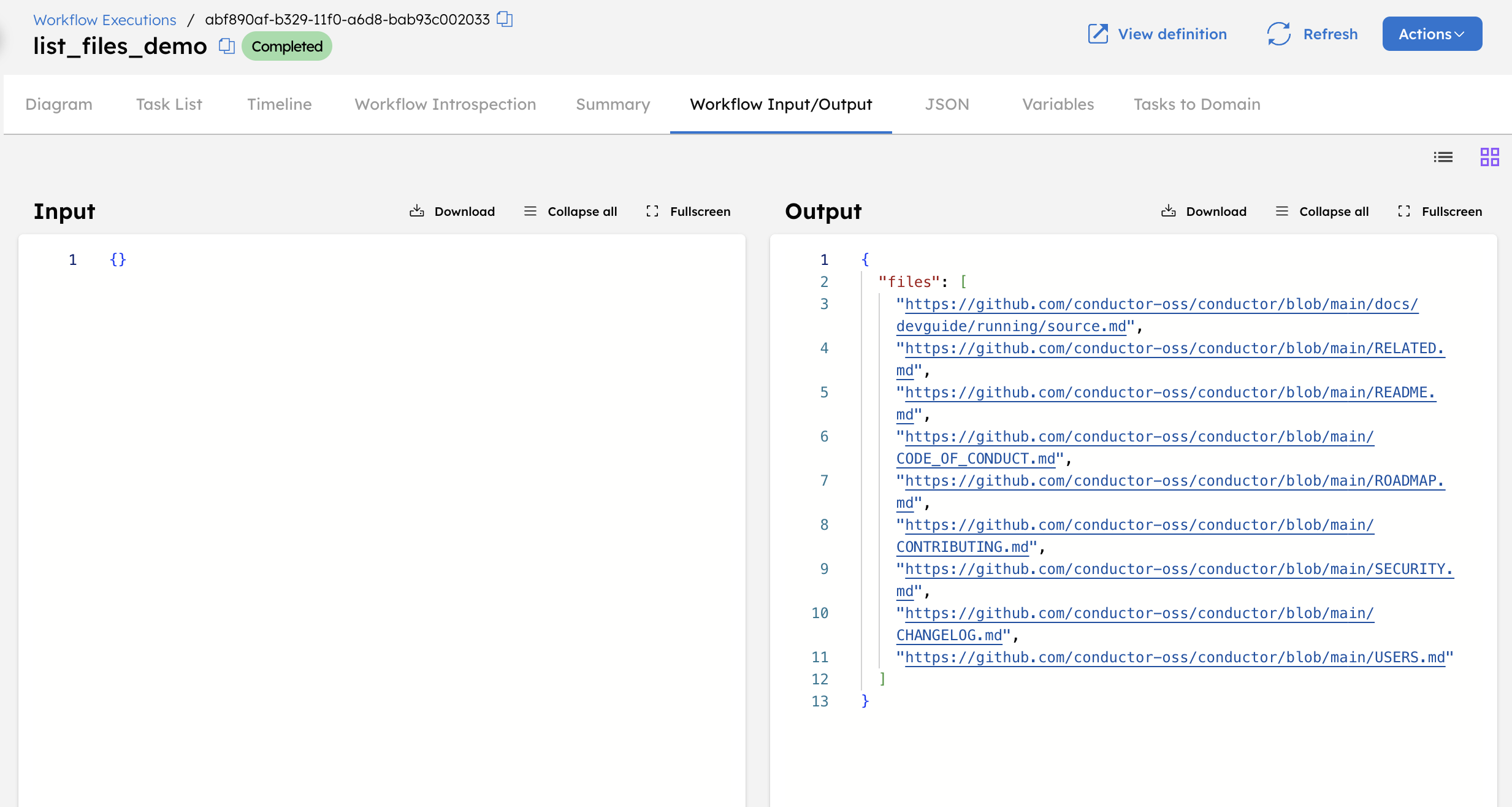

Let’s execute the workflow using the Execute button.

When executed, this workflow connects to the specified GitHub repository, identifies all files with the .md extension, and returns a list of their absolute paths.

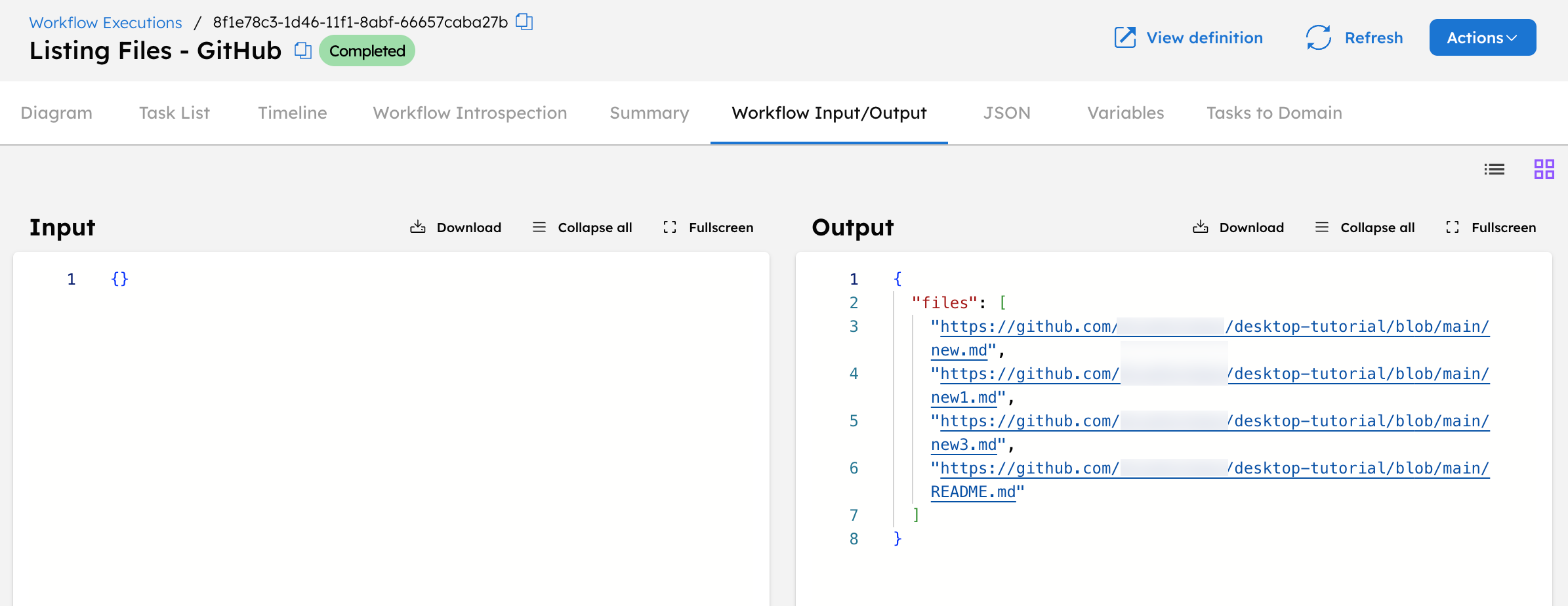

After successful execution, the task output contains a key named files, which stores an array of file URLs retrieved from the repository.

Each element in the files array represents one file path from the input location. Downstream tasks can use these paths directly for further processing.
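One way to pass the list to a downstream task is to reference the output through Conductor's `${taskReferenceName.output.key}` expression syntax. The sketch below builds such a task as a Python dictionary. The task name `process_files`, its reference name, its `SIMPLE` type, and the `files` input key are hypothetical placeholders and not part of the List Files task.

```python
import json


def build_downstream_task(list_files_ref: str) -> dict:
    """Build a hypothetical worker task that consumes the files array."""
    return {
        "name": "process_files",  # hypothetical worker task name
        "taskReferenceName": "process_files_ref",
        "type": "SIMPLE",
        "inputParameters": {
            # Resolved at runtime to the files array of the List Files task.
            "files": "${" + list_files_ref + ".output.files}",
        },
    }


if __name__ == "__main__":
    print(json.dumps(build_downstream_task("lf"), indent=2))
```

Here `lf` is the taskReferenceName used for the List Files task in the workflow above.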

List files from a private GitHub repository

In this example, we will:

- Create a Git Repository integration in Orkes Conductor.

- Create a workflow that uses the List Files task.

- Run the workflow and verify the output.

Step 1: Create a Git Repository integration in Orkes Conductor

Create a Git Repository integration using a personal access token with read access to the private repository from which the files are to be listed.

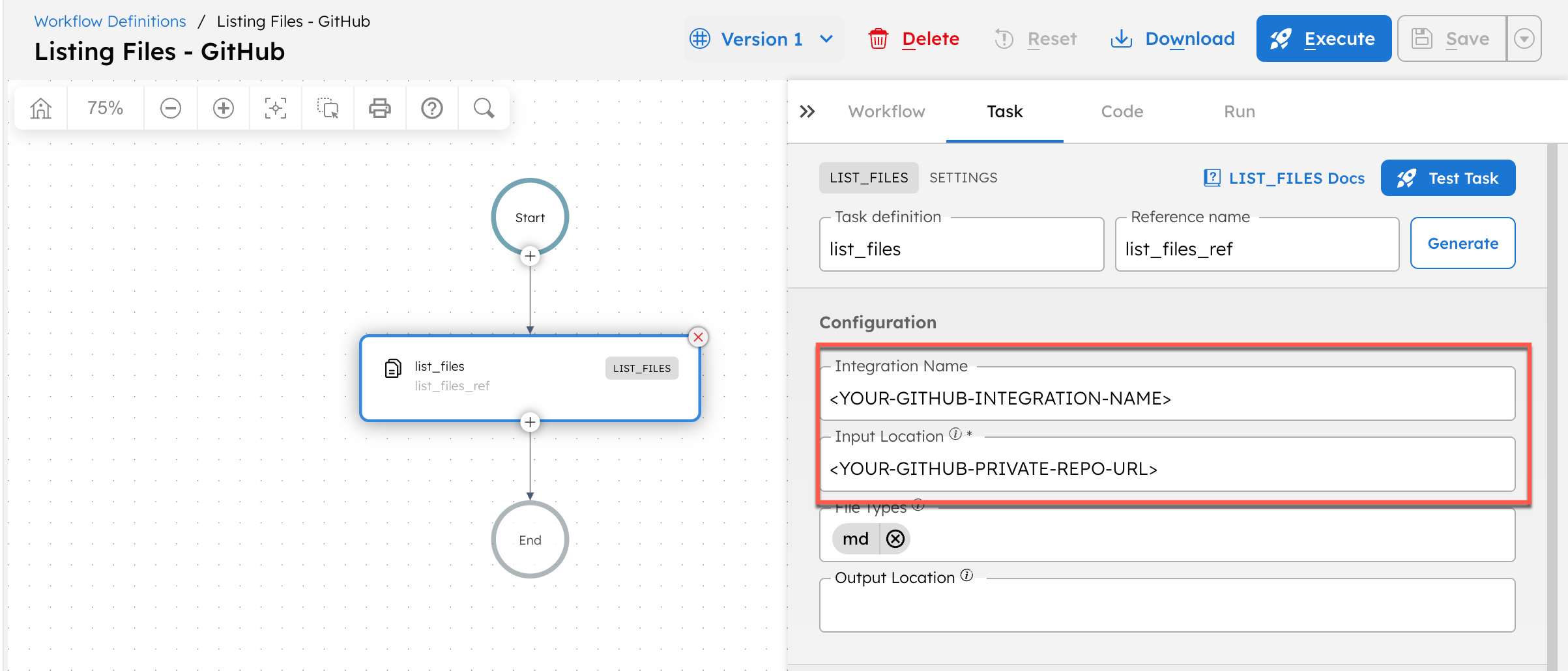

Step 2: Create a workflow in Orkes Conductor

To create a workflow using Conductor UI:

- Go to Definitions > Workflow from the left navigation menu on your Conductor cluster.

- Select + Define workflow.

- In the Code tab on the right panel, paste the following code:

{
  "name": "Listing Files - GitHub",
  "description": "Workflow to list files from a private GitHub repository.",
  "version": 1,
  "tasks": [
    {
      "name": "list_files",
      "taskReferenceName": "list_files_ref",
      "inputParameters": {
        "inputLocation": "<YOUR-GITHUB-PRIVATE-REPO-URL>",
        "fileTypes": [
          "md"
        ],
        "integrationName": "<YOUR-GITHUB-INTEGRATION-NAME>"
      },
      "type": "LIST_FILES"
    }
  ],
  "schemaVersion": 2
}

- Replace <YOUR-GITHUB-PRIVATE-REPO-URL> with your private repository URL and <YOUR-GITHUB-INTEGRATION-NAME> with the integration name created in Step 1.

- Select Save > Confirm.

Step 3: Run the workflow and verify the output

Select the Execute button from the workflow definition page. This takes you to the workflow execution page. Once the workflow completes successfully, select Workflow Input/Output to verify the output.

The output contains a files array with the absolute paths of all listed .md files.

List files from a private GitHub repository and store the list in an AWS S3 bucket

In this example, we will:

- Create a Git Repository integration in Orkes Conductor.

- Create an AWS integration in Orkes Conductor.

- Create a workflow that uses the List Files task.

- Run the workflow and verify the output.

Step 1: Create a Git Repository integration in Orkes Conductor

Create a Git Repository integration using a personal access token with read access to the private repository from which the files are to be listed.

Step 2: Create an AWS integration in Orkes Conductor

Create an AWS integration with the connection type set to Access Key/Secret. Ensure that the access key has write access to the S3 bucket. Note the S3 URI where the List Files task will store the output text file.

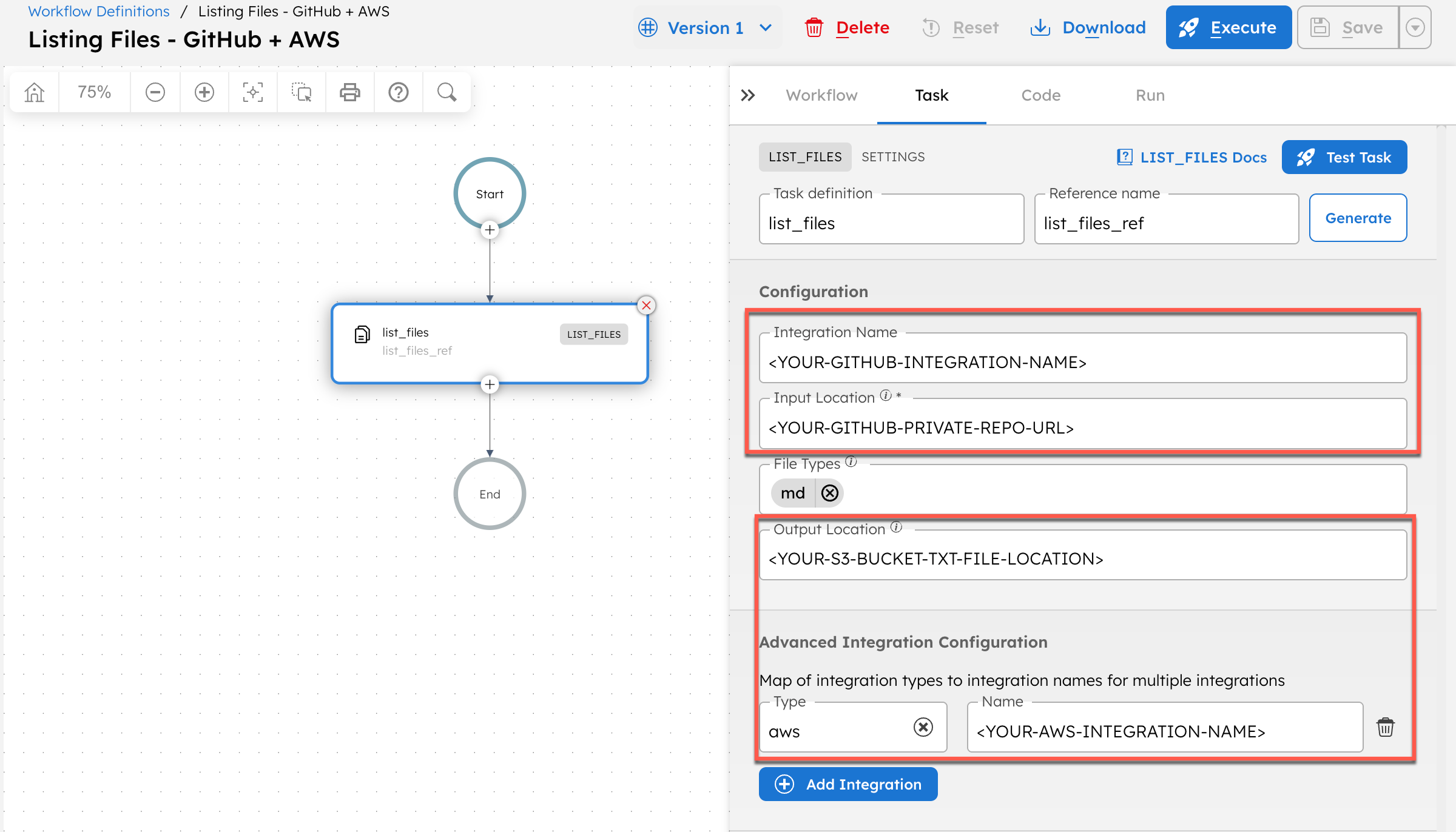

Step 3: Create a workflow in Orkes Conductor

To create a workflow using Conductor UI:

- Go to Definitions > Workflow from the left navigation menu on your Conductor cluster.

- Select + Define workflow.

- In the Code tab on the right panel, paste the following code:

{
  "name": "Listing Files - GitHub + AWS",
  "description": "Workflow to list files from a private GitHub repository and store them in a private AWS S3 bucket text file.",
  "version": 1,
  "tasks": [
    {
      "name": "list_files",
      "taskReferenceName": "list_files_ref",
      "inputParameters": {
        "inputLocation": "<YOUR-GITHUB-PRIVATE-REPO-URL>",
        "fileTypes": [
          "md"
        ],
        "integrationName": "<YOUR-GITHUB-INTEGRATION-NAME>",
        "outputLocation": "<YOUR-S3-BUCKET-TXT-FILE-LOCATION>",
        "integrationNames": {
          "aws": "<YOUR-AWS-INTEGRATION-NAME>"
        }
      },
      "type": "LIST_FILES"
    }
  ],
  "schemaVersion": 2
}

- Replace the following:
  - <YOUR-GITHUB-INTEGRATION-NAME> with the integration name created in Step 1.
  - <YOUR-GITHUB-PRIVATE-REPO-URL> with your private repository URL from which files are to be listed.
  - <YOUR-S3-BUCKET-TXT-FILE-LOCATION> with the S3 URI. For example: s3://<YOUR-BUCKET-NAME>/<TXT-FILE-NAME>.txt.
  - <YOUR-AWS-INTEGRATION-NAME> with the AWS integration name created in Step 2.

- Select Save > Confirm.

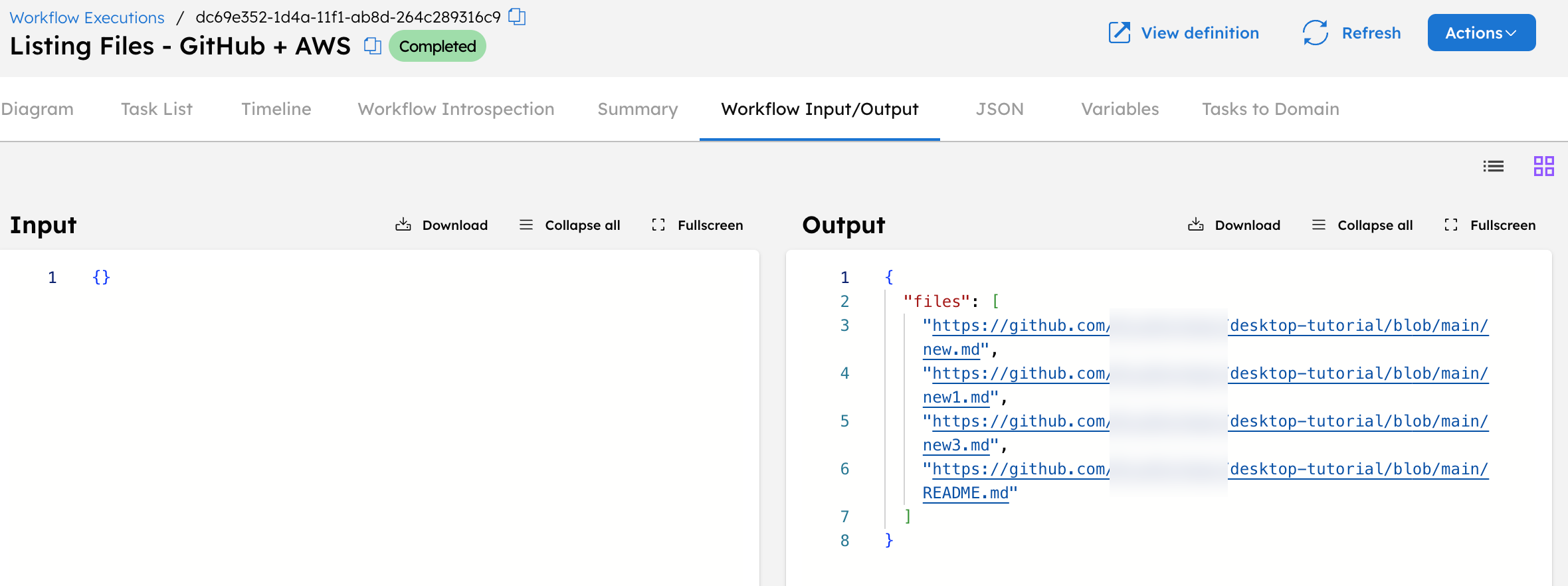

Step 4: Run the workflow and verify the output

Select the Execute button from the workflow definition page. This takes you to the workflow execution page.

When executed, this workflow connects to the specified GitHub repository, lists all .md files, and writes the file list to an S3 bucket. Each line in the file contains one absolute file path.

Once the workflow completes successfully, select Workflow Input/Output to verify the output. The task output contains a key named files, which stores an array of file URLs retrieved from the repository.

Open the S3 bucket to confirm that the output text file has been written, with each line containing one absolute file path.
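To check the written file programmatically rather than in the AWS console, a short Python sketch such as the following could be used. It assumes that the boto3 library is installed, that AWS credentials with read access are configured in the environment, and that the file is UTF-8 text. The helper names are hypothetical.

```python
from urllib.parse import urlparse


def split_s3_uri(uri: str) -> tuple:
    """Split s3://bucket/key into (bucket, key)."""
    parsed = urlparse(uri)
    if parsed.scheme != "s3" or not parsed.netloc:
        raise ValueError(f"Not an S3 URI: {uri}")
    return parsed.netloc, parsed.path.lstrip("/")


def parse_file_list(text: str) -> list:
    """Return one path per non-empty line of the output text file."""
    return [line.strip() for line in text.splitlines() if line.strip()]


def read_file_list_from_s3(uri: str) -> list:
    """Download the text file written by the task and parse it."""
    import boto3  # assumed to be installed; imported lazily

    bucket, key = split_s3_uri(uri)
    body = boto3.client("s3").get_object(Bucket=bucket, Key=key)["Body"]
    # Assumption: the file is UTF-8 encoded text.
    return parse_file_list(body.read().decode("utf-8"))


if __name__ == "__main__":
    sample = "https://example.com/a.md\nhttps://example.com/b.md\n"
    print(parse_file_list(sample))
```

Calling `read_file_list_from_s3` with the S3 URI used as the output location should return the same paths as the files array in the task output.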